Anthropic Backlash Over Chinese Labs and Claude Claims

Anthropic says DeepSeek, Moonshot, and MiniMax used fake accounts to distill Claude outputs, prompting backlash over evidence and double standards.

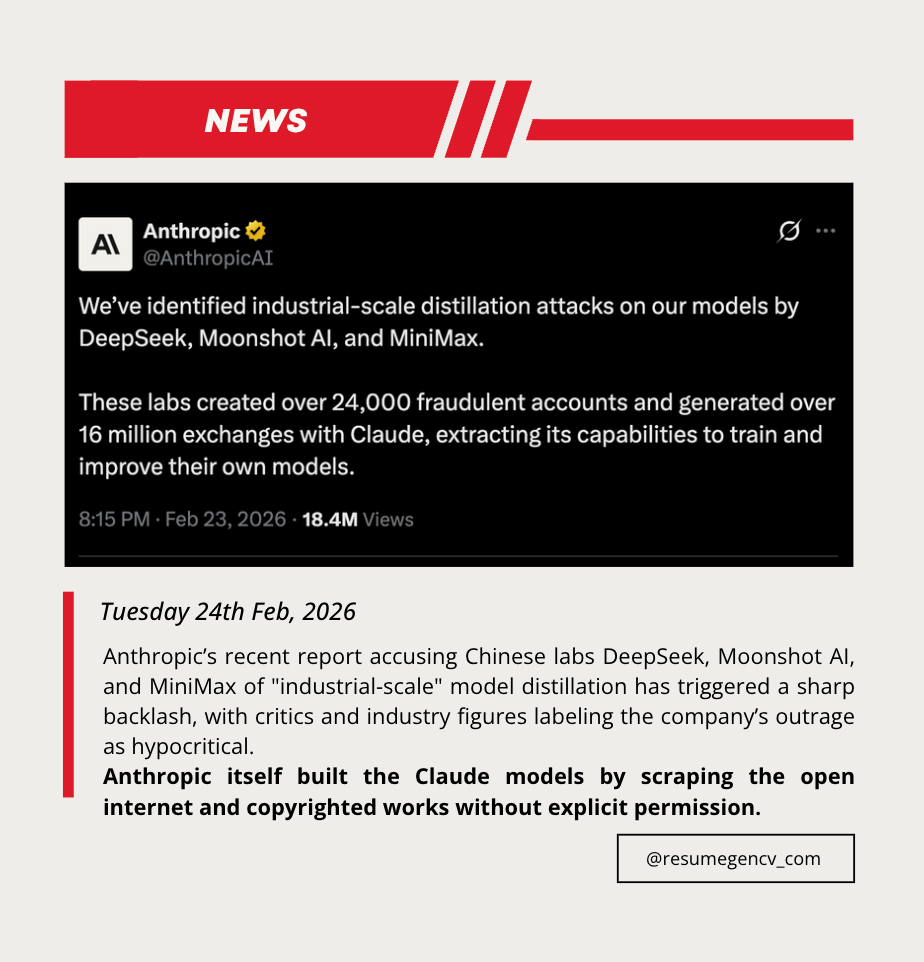

On February 23, 2026, Anthropic said Chinese labs including DeepSeek, Moonshot, and MiniMax used large numbers of fraudulent accounts to extract Claude outputs and improve their own models through distillation.

The claim quickly drew backlash across developer and AI policy circles, with critics questioning how much of the evidence Anthropic has disclosed and whether major labs apply consistent standards to output reuse.

What Anthropic claimed

Anthropic said the activity involved:

- More than 16 million Claude interactions

- Roughly 24,000 fraudulent accounts

- Repeated attempts to capture reasoning and tool-use behavior

The company framed this as industrial-scale policy abuse, and as a safety risk if models built from the copied outputs remove guardrails.

Why the backlash escalated

Three arguments drove most of the criticism:

- Transparency gap: Anthropic published high-level numbers, but outside researchers cannot independently verify the full dataset behind the claim.

- Industry-wide distillation norms: Critics argue distillation is a standard optimization method, so the key dispute is where competitive learning ends and abusive extraction begins.

- Double-standard concern: Some developers say frontier labs defend broad data use for model training while drawing tighter lines once their own outputs are reused.

The dispute now goes beyond one accusation. It highlights a wider industry problem: policy language around distillation is still vague, while competitive pressure to reuse model outputs is increasing.
